If you’re side-eyeing the title, thinking “are humans programmable” sounds suspiciously like another one of those “Productivity Hacks” or “Manifest Your Reality” pieces, rest assured: it isn’t.

This piece is neither spiritual in the metaphysical sense, nor coldly rational in a boring “brain-hacks” fashion.

Instead, it’s a note on a clear pattern we can all observe in our species’ behavior. One that operates at a far more foundational level.

What if you could stop fighting yourself to achieve your goals, and just become someone who would’ve done so anyway? Not out of sudden bursts of motivation, but because it’s who you truly are.

Before we begin, here’s a fair warning. This article asks you to accept a few principles, framed here as questions.

Not beliefs. Principles:

- Are you a complex system with behaviors that have emerged to optimize your interaction with your environment?

- Have you been given clear logical rules and directives that have enabled you to learn and grow exponentially over time?

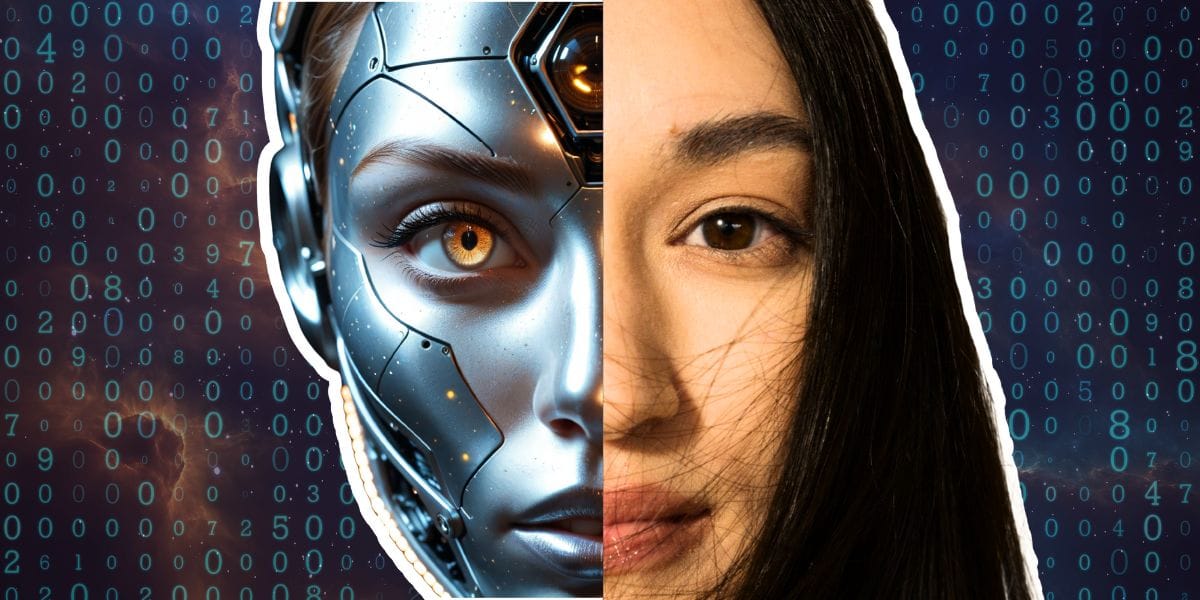

If you answered ‘yes,’ you may be more similar to AI than most are comfortable admitting.

And there’s a good reason for that.

How Does AI Learn?

We built machine-learning systems using the same structural logic observed in reality: that explicit instruction breaks down in complex environments, and adaptive systems trained on feedback outperform rigid rule-based ones.

Machine learning.

So what does that actually mean?

Oxford Languages defines the term as:

“The use and development of computer systems that are able to learn and adapt without following explicit instructions, by using algorithms and statistical models to analyze and draw inferences from patterns in data.”

The key phrase here is “without following explicit instructions.”

Machine Learning vs. humanOS

In layman’s terms, AI learns and adapts when it’s given algorithms (logical rules) and inputs (training data). This then allows it to make decisions or predictions without being programmed for every given scenario.

With sufficient, relevant data and feedback, its predictions become increasingly reliable.
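
To make that concrete, here is a minimal Python sketch. It is a toy under stated assumptions, not a description of how any production AI system is built. The learner is never told the hidden pattern (here an invented one, y = 2x + 1); it only sees examples and gets feedback on how wrong each guess was. The function names `make_examples`, `train`, and `mean_abs_error` are hypothetical, chosen purely for illustration.

```python
import random

rng = random.Random(0)  # fixed seed so the run is repeatable


def make_examples(n):
    """Generate (x, y) pairs from a hidden pattern plus a little noise."""
    examples = []
    for _ in range(n):
        x = rng.uniform(-1, 1)
        y = 2 * x + 1 + rng.gauss(0, 0.1)  # the rule the learner is never shown
        examples.append((x, y))
    return examples


def train(examples, epochs=100, lr=0.05):
    """Fit y ~ w*x + b by nudging w and b after every wrong guess."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x, y in examples:
            error = (w * x + b) - y  # feedback: how far off was the prediction?
            w -= lr * error * x      # adjust in the direction that shrinks the error
            b -= lr * error
    return w, b


def mean_abs_error(model, examples):
    """Average distance between predictions and the true values."""
    w, b = model
    return sum(abs((w * x + b) - y) for x, y in examples) / len(examples)


train_set = make_examples(50)
test_set = make_examples(50)  # fresh cases the learner has not seen

error_before = mean_abs_error((0.0, 0.0), test_set)
w, b = train(train_set)
error_after = mean_abs_error((w, b), test_set)

print(f"learned w={w:.2f}, b={b:.2f}")
print(f"error before training: {error_before:.2f}")
print(f"error after training:  {error_after:.2f}")
```

Nothing in `train` mentions the rule itself. The only things the learner receives are examples and a correction signal, and the error on unseen cases still drops.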

If this is one of the most effective ways for a complex system to learn, then why do we, complex systems ourselves, consciously try to force-feed ourselves the material required for growth, as if memorizing a script that will fail the moment it meets a real-life scenario?

Instead of teaching ourselves skills, facts, and habits in this simple manner, we try to jam textbooks, motivational quotes, and unachievable schedules into an OS that was never designed for that kind of rigidity.

And when this fails, we tend to conclude that the problem is personal.

It raises a question that would make even the strictest materialist go weak at the knees:

Are humans programmable?

Now, instead of going down a sci-fi rabbit hole and conjuring up conspiracy theories that would have Bill Kaysing rolling in his grave, let’s take a milder, more logical approach.

The question isn’t whether this kind of programmability can be used, but who understands it first.

The Pioneers of Self-Programming

This concept isn’t new. Not even remotely.

However, it’s been primarily intuitive—rarely framed in an intentional way. And defining this formula effectively may mark the difference between routine output and original work.

For instance, George Lucas’ immersion in mythology, history, and anthropological frameworks may have been natural interests of his, but they were key in developing the unique framing of good versus evil that marked his films.

Christopher Nolan’s fascination with physics, time, and music shows up not only in the themes of his films, but in their structure. He’s described using a visual analogue of the Shepard tone (a musical technique)—intercutting storylines so that as one reaches its peak, another is still building. This creates the illusion of perpetual escalation, a structural approach he’s returned to repeatedly.

This approach is not exclusive to filmmakers. Steve Jobs’ exposure to Eastern aesthetics, typography, and music shaped Apple’s design philosophy, and these influences later surfaced in products like the iMac and iPod.

The pattern is easy to miss, but consistent.

The method I’m proposing is not about adopting an ‘information diet’ and cutting out everything unrelated to your field—it’s almost the opposite.

Instead, center your field or objective as the primary category, and branch out often—always with the general goal of reconnecting other fields to your own.

This is where innovation lives.

What Does This Look Like in Practice?

Here’s the uncomfortable truth: no system develops expertise in an environment where the main objective is drowned out by entirely unrelated topics.

Mastery rarely emerges from brief, isolated practice. Instead, it follows periods of deep immersion—where one subject becomes the dominant reference frame. Attention, curiosity, and interpretation must be repeatedly drawn back to the same set of problems.

Many people spend a large share of their waking hours exposed to content selected for them, another significant portion fulfilling obligations, and only a fraction engaging deliberately with the skill they hope to master.

In this context, the absence of progress isn’t mysterious. It’s predictable.

What changes outcomes isn’t discipline or motivation, but relevance. When the majority of one’s inputs (media, conversation, observation) begin to orbit a central objective, behavior reorganizes without resistance.

The system doesn’t need to be forced. It adapts automatically to what it is consistently exposed to.

That’s the advantage of curation. No motivation or discipline needed—only redirection.

Case Study: My Writing Projects

On a smaller, more grounded scale, here’s what ‘self-programming’ looks like.

Whenever I’m writing a new story, immersion is inevitable—because just one story can generate endless new questions, loose ends, and conflicts. And my mind has been trained over time to look everywhere for the answers.

I don’t consider myself a disciplined person, and traditional methods have never worked for me. Curation isn’t just a ‘cool method’ for me—it’s the only approach that reliably shapes my behavior at a foundational level.

My mind can’t produce what it doesn’t consume. So the material I consume tends to align with the story itself: video biographies of authors working in similar narrative spaces, writing theory related to the genre I’m refining, series and films that share the same structural or thematic DNA as my story. Even the articles I’ve been writing have been related to either my process or the themes I’m handling.

If I’m consuming content all day regardless, I may as well ensure it’s all aligned with my objectives.

As for my digital environment, I follow accounts focused on science, AI, philosophy, and writing, so all of this readily available content actively feeds the story instead of directing me off course.

I’m not fully immersed because I’m forcing discipline. The environment has simply been arranged so that story development becomes the natural outcome.

I eliminated nothing. I simply reorganized my inputs so they all point toward the same objective.

The Final Verdict: Are Humans Programmable?

We are logical systems that follow crystal-clear learning mechanisms, not unlike those that allow machine-learning systems to generalize.

Behavior, skill, and innovation don’t emerge from force or motivation, but from sustained exposure to aligned inputs. Change the environment, and the system adapts.

Once this is understood, learning stops feeling like resistance. Not because effort disappears, but because the system is no longer being trained against itself.

Nothing mystical is required. Only structure.
